Neuron surgery: Why security needs AI interpretability

🧠🕵️‍♂️ Malware is now asking Gemini to rewrite itself mid-execution. Your AV has no idea. My thoughts on why interpretability is the next frontier in cyber as we ship black-box brains into production.

Some fascinating security research this week made me think about why AI interpretability and explainability will be the next frontier for cybersecurity. Understanding what really happens under the hood in the language model and why it predicts those exact tokens isn’t just academic curiosity or benchmark chasing anymore.

Alphabet’s favourite advertisement company has now identified a couple of interesting malware samples in the wild with just-in-time self-modification. We are talking about adaptive malicious software that hides its signature from antivirus by changing itself. It effectively asks an AI assistant at runtime to generate several code variations for stages such as persistence or data exfiltration. The AI (currently called via APIs) writes a new shell script, which the malware then executes, generating fresh variations of itself to avoid detection.
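Because these samples currently reach the model over an API, the call itself is one of the few things a defender can see. Here is a minimal sketch of that idea, not a production detector: it flags outbound connections to public LLM API hosts from processes you did not expect to use them. The `EgressEvent` log schema, the process names and the allowlist are all hypothetical, and the host list is illustrative and incomplete.

```python
from dataclasses import dataclass

# Hostnames of some public LLM APIs. Illustrative and incomplete.
LLM_API_HOSTS = {
    "generativelanguage.googleapis.com",
    "api.openai.com",
    "api.anthropic.com",
}

# Hypothetical allowlist: processes that are expected to call an LLM API.
ALLOWED_PROCESSES = {"ide-assistant", "support-chatbot"}


@dataclass(frozen=True)
class EgressEvent:
    """One outbound connection, in a made-up log schema."""
    process: str
    host: str


def unexpected_llm_callers(events):
    """Return events where a non-allowlisted process contacts an LLM API host."""
    return [
        e for e in events
        if e.host.lower() in LLM_API_HOSTS and e.process not in ALLOWED_PROCESSES
    ]


if __name__ == "__main__":
    sample = [
        EgressEvent("ide-assistant", "api.openai.com"),
        EgressEvent("invoice-viewer", "generativelanguage.googleapis.com"),
        EgressEvent("invoice-viewer", "example.com"),
    ]
    for event in unexpected_llm_callers(sample):
        print(f"review: {event.process} -> {event.host}")
```

This only works while the model sits behind a well-known endpoint. Once the model ships inside the binary, this signal disappears.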

Reverse engineering obfuscated binaries and return-oriented programming chains was already an art form. Now imagine having a non-deterministic language model’s inference results sitting directly in your execution path. This can turn your malware research into actual neuron surgery.

Think like an attacker for a moment

For now, language models in an application’s workflow are mostly called via API or wrapped in popular frameworks like LangChain or LlamaIndex to abstract away (or sometimes increase) their complexity. We’ll reach a point, however, where the cost of tokens drops so far that it becomes cheaper and much faster to run small models locally or at the edge, lower down in the stack.

Take your favourite tiny open-weight coding assistant, a small language model in the sub-billion parameter range.

Strip it down, and fine-tune to generate simple mutations of some key malware primitives and building blocks.

Add it to your malware’s control flow to self-obfuscate and adapt to different environments.

Slip it into your target’s software supply chain, and make it act benign completing trivial tasks until it is running in the target environment.

When the right environment or input prompt is detected, nudge the control flow against the target.

This does not require nation state level sophistication anymore.

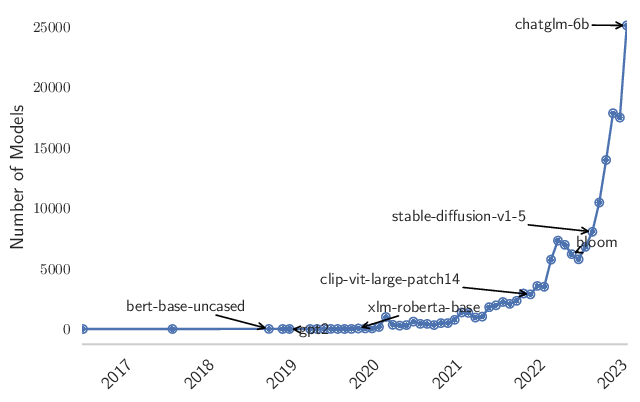

Hugging Face is already becoming your new GitHub, with a steep increase in uploaded models, and soon AI at the edge will be the new OS paradigm. Does your threat model mention model provenance or a model Bill of Materials? How do you cover your AI supply chain?
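As a starting point for provenance, you can at least pin the exact bytes of the model you reviewed. The sketch below is my own minimal take, not a standard Bill of Materials format: it hashes every file in a model directory into a manifest and later reports anything missing, modified or unexpected. The function names are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large weight files are never fully loaded."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(model_dir):
    """Map each file's path (relative to model_dir) to its SHA-256 digest."""
    root = Path(model_dir)
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def verify_manifest(model_dir, manifest):
    """Return a sorted list of problems; an empty list means a clean match."""
    current = build_manifest(model_dir)
    problems = []
    for name, expected in manifest.items():
        if name not in current:
            problems.append(f"missing: {name}")
        elif current[name] != expected:
            problems.append(f"modified: {name}")
    for name in current.keys() - manifest.keys():
        problems.append(f"unexpected: {name}")
    return sorted(problems)
```

You would call `build_manifest` once when a model passes review, store the result somewhere the deployment cannot write to, and run `verify_manifest` before loading. A matching hash tells you the weights are the ones you reviewed. It tells you nothing about what those weights will do.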

LLMs are your new non-deterministic OS

LLMs are already a new genre of OS and they are sweeping through our stack on many layers. The smaller self-hosted, on-device or on-edge models are the likely future for simpler general utility, as the more expensive frontier models of big tech will be aimed at more complex tasks. A lot of weight will be put on the shoulders of those smaller self-hosted models embedded in different applications. There is a point where we’ll start to drive more runtime execution flows directly into these, and it raises the question:

With a language model operating at its core, how do you tell the difference between a benign and a malicious application?

Your static analysis will probably only get as far as: “this app is using AI”

Today, looking at an application, we can only tell whether it has embedded AI or not, and that’s about it. The frontier labs won’t even tell you what data composition their models were trained on, and they give very limited monitoring capability on the usage surface at best. Everything else under the hood is a black box, with limited reasoning exposed.
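To make that limit concrete, here is roughly what file-level inspection can achieve. This is a sketch under my own assumptions: it walks an unpacked application directory and reports files that look like model weights, either by the four-byte `GGUF` header or by a short, non-exhaustive list of common extensions. The function name is hypothetical.

```python
from pathlib import Path

# Extensions commonly used for model weights. Illustrative, not exhaustive.
MODEL_EXTENSIONS = {".gguf", ".safetensors", ".onnx", ".pt", ".tflite"}
GGUF_MAGIC = b"GGUF"  # GGUF files begin with these four bytes


def find_embedded_models(app_dir):
    """Return (relative_path, reason) pairs for files that look like model weights."""
    root = Path(app_dir)
    hits = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        with open(path, "rb") as fh:
            head = fh.read(4)
        rel = path.relative_to(root).as_posix()
        if head == GGUF_MAGIC:
            # Header check catches weight files that were renamed.
            hits.append((rel, "GGUF magic bytes"))
        elif path.suffix.lower() in MODEL_EXTENSIONS:
            hits.append((rel, f"extension {path.suffix.lower()}"))
    return hits
```

The output is a list of suspicious files and nothing more. It answers “is there a model in here?” but says nothing about what the model was tuned to do.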

Using open-weight models gives you more control, but we know from jailbreak research that all models can have dark thoughts buried in their weights. Luring them out is just a matter of prompting them the right way. Their training data is effectively the whole internet. How do you tell if a model is aligned to your values, and isn’t tuned to activate a sleeper agent when it hears the right trigger word?

How do you know your app isn’t biased towards one supplier’s endpoint versus another?

Your behavioural analysis tool will likely sleep on it too

The (not so new) trend is to look at behaviour instead of signatures and fingerprints. These approaches look for anomalies and deviations from a normal baseline. However, variation increases significantly when non-deterministic “multi-agent” configurations are at play, and the line between a mistake and malice in action becomes much blurrier. Your compliance API will cover your input and output, but no internal reasoning or detailed decision logs will explain why something got blocked by a safety filter.
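A toy example shows why the baseline approach struggles here. The sketch below uses a plain z-score over one metric, tool calls per session, which is my own simplification rather than how any particular product works. All numbers are invented for illustration.

```python
import math
from statistics import mean, stdev


def zscore(value, baseline):
    """Standard score of value against baseline (needs at least two observations)."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # Flat baseline: any deviation at all is infinitely surprising.
        return 0.0 if value == mu else math.copysign(math.inf, value - mu)
    return (value - mu) / sigma


def is_anomalous(value, baseline, threshold=3.0):
    """Flag values more than `threshold` standard deviations from the baseline mean."""
    return abs(zscore(value, baseline)) > threshold


if __name__ == "__main__":
    # Invented data: tool calls per session.
    deterministic_service = [10, 11, 9, 10, 10, 11, 9, 10]
    multi_agent_app = [2, 35, 8, 60, 15, 4, 48, 22]

    burst = 40  # the same suspicious session observed in both apps
    print(is_anomalous(burst, deterministic_service))
    print(is_anomalous(burst, multi_agent_app))
```

The same burst of 40 calls stands out sharply against the tight baseline of the deterministic service, but sits comfortably inside the normal spread of the agentic one. The wider the legitimate variation, the more room there is to hide.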

If you decide to self-host or even train or fine-tune your own models, a wide new range of controls opens up. It also brings a much broader range of responsibilities for safety and security evaluations, with limited tooling available.

A few open-source tools are now emerging in the model editing and ablation space. These help you modify model behaviour, as I covered in my previous post. Their focus is on censorship removal for now. Heretic - released recently - can even automate this process. Stripping the model’s ability to refuse requests is one thing, but if you adapt these techniques for other behaviour changes, the question becomes: How do you x-ray a model’s thought process?

How do you run a “background check” on your AI agent?

This is a fascinating new field with a lot of work ahead. Mechanistic interpretability and explainability are still in their infancy, with some promising early utilities like the open-source circuit tracer from Anthropic, which lets you tap into the model’s thoughts - not test time or chain of thought, but token prediction paths directly from the neural net. For now, analysing these paths is very laborious and expensive, so you have to handpick your investigations carefully.

Even after passing a background check, your model could always be turned into a bad actor by the right jailbreak. Runtime behaviour monitoring and interpretability will be even more important if you have a lot of firepower, like frontier labs do. You want to understand which neurons and paths trigger in your artificial genius’s brain, so you can catch abuse early, as models already get second thoughts and we now know they can even cheat our benchmarks.

Swarms of malicious agents with state-of-the-art coding capability

Anthropic’s latest report on espionage using Claude shows their models being abused to aid attackers in automating full attack workflows, with enumeration, exploit development, persistence, data collection and exfiltration stages all done by the mighty coding assistant in a few hours. That means thousands of requests executed by hundreds of agents working asynchronously, delivering human-level targeting at the speed of a machine.

Picture the SOC that needs to respond not just to phishing, credential stuffing or script kiddie attempts with noisy fuzzers, but to swarms of state-of-the-art AI agents carefully analyzing and attacking your systems with constantly evolving heuristics. Now add unanalyzable black box components that look benign but behave maliciously.

Have you built these scenarios into your threat model?

Today most common AI use cases can be covered with existing cybersecurity controls and a sensible DLP strategy. But once you lift the lid on the model’s inner workings, and cheap tokens accelerate reliance on LLMs for daily computation tasks, a whole new range of risks emerges that we don’t have the right interpretability tooling for.

Some low-hanging fruit

While interpretability improves, there are steps you can take now:

Threat model your AI use cases from employee, developer and customer perspectives.

Lean on existing controls first - the data control problem isn’t new or AI specific.

Set up an AI evaluation pipeline so you can quickly trial new models and APIs and compare them across quality, reliability, cost and risk - as new paradigm shifts arrive.

The proof is in the pudding - adopt language models in your own operations, so you actually experience their benefits (and failures) first-hand.

Measure the impact of AI adoption on both risk and productivity, not just cost savings.
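For the evaluation pipeline step, the comparison logic itself can start out very small. The sketch below is one possible shape, assuming you already have per-model scores normalised to 0..1 from your own test suites. The `EvalResult` fields mirror the four axes mentioned above. The weights, the risk floor and the model names are hypothetical placeholders you would set yourself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    """Scores for one candidate, each normalised to 0..1 where higher is better."""
    name: str
    quality: float
    reliability: float
    cost: float  # 1.0 means cheapest
    risk: float  # 1.0 means lowest risk


# Hypothetical weights; they should reflect your own priorities.
WEIGHTS = {"quality": 0.4, "reliability": 0.2, "cost": 0.1, "risk": 0.3}


def weighted_score(result, weights=WEIGHTS):
    """Combine the per-axis scores into a single weighted sum."""
    return sum(getattr(result, axis) * w for axis, w in weights.items())


def rank(results, weights=WEIGHTS, min_risk=0.5):
    """Drop candidates below the risk floor, then sort the rest best-first."""
    eligible = [r for r in results if r.risk >= min_risk]
    return sorted(eligible, key=lambda r: weighted_score(r, weights), reverse=True)


if __name__ == "__main__":
    candidates = [
        EvalResult("model-a", quality=0.9, reliability=0.8, cost=0.3, risk=0.4),
        EvalResult("model-b", quality=0.7, reliability=0.9, cost=0.8, risk=0.8),
        EvalResult("model-c", quality=0.8, reliability=0.6, cost=0.5, risk=0.6),
    ]
    for r in rank(candidates):
        print(f"{r.name}: {weighted_score(r):.2f}")
```

The risk floor is a deliberate design choice: a model that fails your safety bar is excluded outright rather than allowed to buy its way back in with a high quality score.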

References

https://cloud.google.com/blog/topics/threat-intelligence/threat-actor-usage-of-ai-tools

https://www.anthropic.com/news/disrupting-AI-espionage

https://github.com/p-e-w/heretic

https://www.anthropic.com/research/open-source-circuit-tracing